Beyond the Chat Box: Why a Q&A feed isn't engagement

The first post in a series on what live audience engagement actually looks like when there's an AI team in the room.

Here's a familiar scene.

You're running a town hall. 400 people on the call. The Q&A panel is filling up. Someone asks the same question three different ways. Someone else writes a paragraph that's really three questions stacked. A few jokes. Two complaints. A thoughtful question that deserves a real answer is sitting at position 47, where it will quietly die.

Your moderator is reading as fast as they can. They're upvoting, clustering in their head, copy-pasting the good ones into a Slack DM with the CEO so she can pick a handful to read aloud. The clock is running. The CEO answers four questions. The session ends. The other 73 questions sit in a CSV that nobody will ever open.

We call this audience engagement.

It isn't.

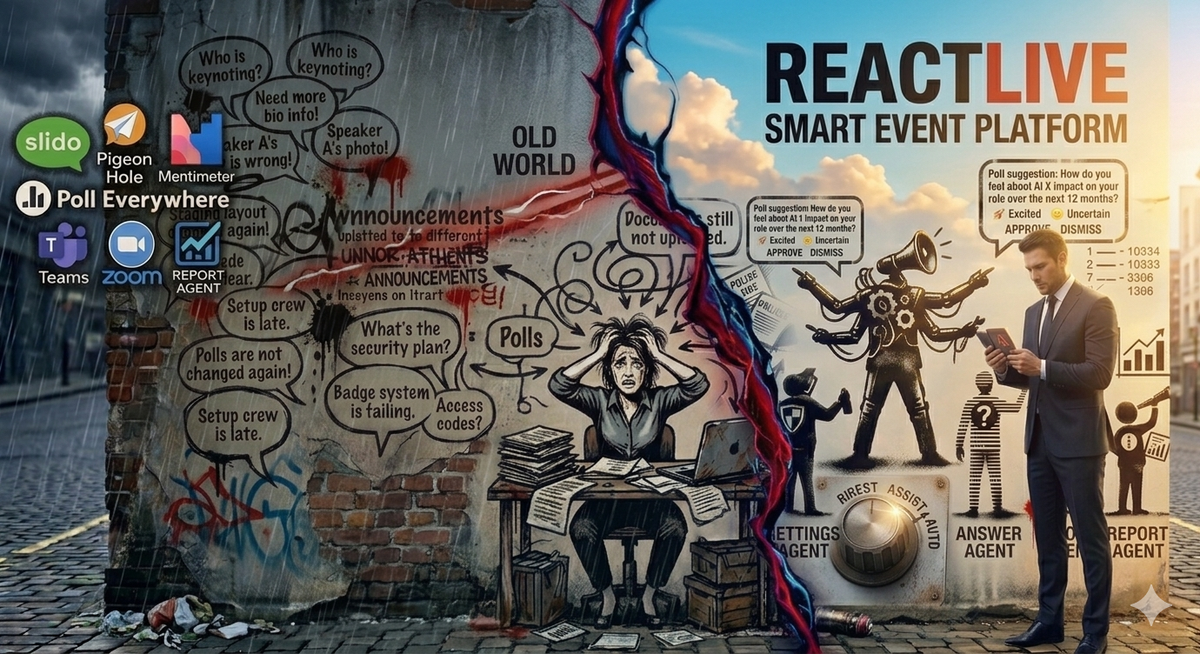

What we've actually been doing for a decade

The chat box — and its slightly fancier cousin, the Q&A panel — has been the default unit of "engagement" since Slido shipped in 2012. The shape is always the same: a feed of submissions, a couple of upvote arrows, maybe a poll button, a moderator trying to triage in real time, and an event host trying to look like they're listening.

It's a backlog with branding.

The problem isn't the tools. Slido, Mentimeter, Zoom Q&A, the chat — they all do exactly what they say. They collect input. The problem is that collecting input and engaging an audience aren't the same thing, and we've been pretending they are because that was the best technology we had.

Engagement isn't questions going in. Engagement is answers coming out. In real time. To the right people. Grounded in what was actually said.

That's the loop. Most events don't close it. They can't. The math doesn't work — one moderator, one host, hundreds of submissions, sixty minutes. Something has to give, and what gives is the audience's experience of being heard.

What "closing the loop" actually requires

Think about what would have to be true for a 400-person town hall to feel like everyone got an answer.

Someone — or something — would have to:

- Read every question as it comes in

- Recognise that questions 12, 47, and 103 are the same question

- Notice that question 28 was already answered by the speaker two minutes ago and surface that answer

- Catch the one question that's actually a complaint about a specific manager and route it for handling, not broadcast

- Know which questions are the right ones for the host to take live, and which ones can be answered privately right now

- Spot the moment the room's mood shifts and offer a poll that captures it

- Write up what happened, what was unanswered, and what to follow up on — before the host has even closed their laptop

No human moderator can do that for an audience of 400. We've all been pretending otherwise. We've been calling the gap between "questions submitted" and "questions answered" the nature of live events, when really it's just a technology gap.

That gap is what this series is about.
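To make one item on that list concrete, recognising that several submissions are really the same question, here is a minimal sketch. It is not how ReactLive does it: the function names, the stopword list, and the 0.5 threshold are all assumptions made for illustration, and plain word overlap (Jaccard similarity) stands in for whatever a production system would use to judge meaning.

```python
from __future__ import annotations

import re

# Illustrative stopword list (an assumption, not a tuned vocabulary).
STOPWORDS = {
    "the", "a", "an", "is", "are", "we", "will", "be", "to", "of", "for",
    "in", "on", "and", "do", "what", "when", "how", "our", "this", "that",
}


def tokens(text: str) -> frozenset[str]:
    """Lowercase the text and keep the words that carry content."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return frozenset(w for w in words if w not in STOPWORDS)


def jaccard(a: frozenset[str], b: frozenset[str]) -> float:
    """Shared words divided by total distinct words; 0.0 if either set is empty."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_questions(questions: list[str], threshold: float = 0.5) -> list[list[int]]:
    """Greedy grouping: each question joins the first cluster whose
    first member it resembles closely enough, else it starts a new one."""
    clusters: list[tuple[frozenset[str], list[int]]] = []
    for index, question in enumerate(questions):
        words = tokens(question)
        for representative, members in clusters:
            if jaccard(words, representative) >= threshold:
                members.append(index)
                break
        else:
            clusters.append((words, [index]))
    return [members for _, members in clusters]


questions = [
    "When will the office reopen?",
    "When is the office going to reopen?",
    "Is the bonus pool changing this year?",
    "Will the office reopen soon?",
]
print(cluster_questions(questions))  # [[0, 1, 3], [2]]
```

Even this toy version has to touch every question as it arrives, which is exactly the part a single moderator cannot keep up with. Word overlap also misses paraphrases that share no vocabulary, so treat it as a picture of the task, not a solution to it.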

The thesis

ReactLive is built around a single idea: a live event should answer its audience, not just record them.

Not by replacing the moderator. Not by putting a chatbot in the corner that hallucinates answers no one trusts. By giving the moderator a team — five specialist agents that handle the work no human can do at speed and at scale, all coordinated by an orchestrator that knows the tone, the red lines, and the timeline of the event.

- A Setup Agent that turns an agenda and a deck into a configured event in 90 seconds.

- A Protect Agent that handles abuse and spam at machine speed so anonymous Q&A is finally safe to run.

- An Engage Agent that clusters questions, ranks priority, and reads the room.

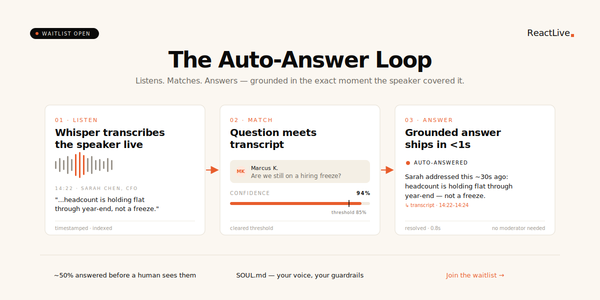

- An Answer Agent that closes the loop — answering grounded questions in real time from your trusted sources only, and escalating the rest.

- A Report Agent that turns the event into insight before the next meeting starts.

Each one has four strength levels: Off, Suggest, Assist, Auto. You decide how much trust to extend, agent by agent, event by event.

That's the team. The rest of this series is about what each of them changes.
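One way to picture the strength dial is as a per-agent, per-event setting. The sketch below is illustrative only and is not ReactLive's configuration format: the `Strength` enum, the `TOWN_HALL` settings, the `dispatch` function, and especially the meaning attached to each level are assumptions, since this post only names the four levels.

```python
from __future__ import annotations

from enum import IntEnum


class Strength(IntEnum):
    """The four levels named in this post, ordered from least to most autonomy."""
    OFF = 0
    SUGGEST = 1
    ASSIST = 2
    AUTO = 3


# Hypothetical settings for one event: agent name -> strength level.
TOWN_HALL = {
    "setup": Strength.AUTO,
    "protect": Strength.AUTO,
    "engage": Strength.ASSIST,
    "answer": Strength.SUGGEST,
    "report": Strength.OFF,
}


def dispatch(agent: str, settings: dict[str, Strength]) -> str:
    """Describe what happens to an agent's proposed action.

    The behaviour per level is an assumed interpretation, not a spec.
    """
    level = settings.get(agent, Strength.OFF)  # unknown agents default to OFF
    if level == Strength.OFF:
        return "agent does not run"
    if level == Strength.SUGGEST:
        return "shown to the moderator as a suggestion"
    if level == Strength.ASSIST:
        return "drafted for the moderator to approve"
    return "applied without waiting for approval"


for name in TOWN_HALL:
    print(f"{name}: {dispatch(name, TOWN_HALL)}")
```

The point of the shape is that trust is set separately for each agent, so a host could let one agent act on its own while keeping another limited to suggestions for the same event.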

What's coming in this series

Over the next eleven posts, we'll go deep on each piece:

- Why we split into five agents instead of one big AI

- How the Off → Suggest → Assist → Auto dial makes AI moderation actually trustworthy

- What each of the five agents does and what it changes about how events feel

- How SOUL.md gives an AI a personality without prompt engineering

- What happens when you turn all the agents off (spoiler: ReactLive is still a great event platform)

- And what we learned dogfooding all of this at our own town halls

If you've ever run a live event and walked away thinking "we could have done so much more with what people gave us," this series is for you.

The chat box did its job. It's time to go beyond it.

Next up: Five agents are better than one big AI. Why ReactLive splits the work — and why a single model can't give you the control event hosts actually need.

Join the waitlist to get early access. Three free events. Locked-in pricing.