Beyond the Chat Box: Five agents are better than one big AI

Post two of a series on what live audience engagement actually looks like when there's an AI team in the room.

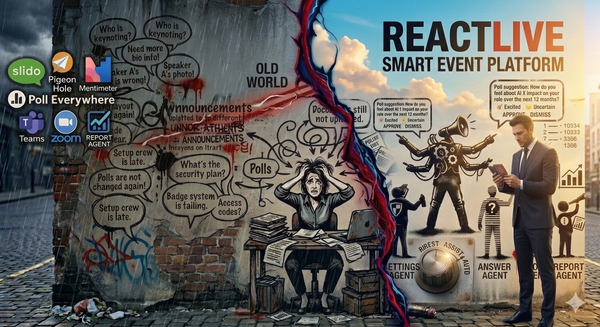

When we started building ReactLive, the obvious thing to do was put one big AI in charge of the event.

One model. One prompt. Feed it the agenda, the slides, the live transcript, the Q&A feed, the moderation rules, the brand guidelines, the audience signals — and ask it to handle everything. Setup. Moderation. Answers. Engagement. Reporting. The whole event, end to end.

It would have been faster to build. It would have demoed beautifully. And it would have been wrong.

Here's why.

The control problem

Imagine you're running an all-hands the morning after earnings. The CFO is on stage. Guidance was revised. Analysts are already publishing takes. Internally, employees are reading the headlines and submitting questions in real time — about the share price, about hiring, about what the revised numbers mean for their team.

You want the AI to aggressively moderate anything that looks like it's leaking material non-public information — auto-hold those submissions, no human needed, no waiting. The legal team's nightmare is a forward-looking statement appearing in the public Q&A feed before anyone catches it.

You also want the AI to suggest answers, not publish them. Not because the answers might be wrong — they might be exactly right — but because in a window this regulated, every AI-generated answer needs a human (and probably a lawyer) to read it before 4,000 employees see it.

Now: tell one big AI to do both of those things at the same time, with one prompt, in one model.

You can't. Not really. You end up with a long, brittle prompt full of conditionals — be aggressive on moderation but conservative on answers, except when the question is about the product roadmap in which case be a bit more forward, except if it mentions a specific number that wasn't in the deck in which case escalate, except… — and a single confidence threshold that has to serve every kind of action the AI takes.

What you actually need is two different policies, applied to two different jobs, with two different risk tolerances. One agent set to Auto. Another set to Suggest. No prompt gymnastics required.

That's the case for splitting the work.

What we did instead

ReactLive runs five specialist agents, coordinated by an Orchestrator:

- Setup Agent — prepares the event before it starts

- Protect Agent — handles moderation in real time

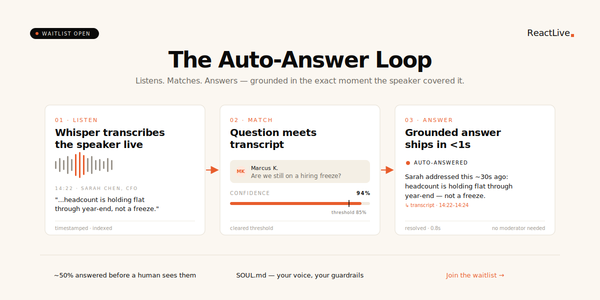

- Engage Agent — clusters questions, ranks priority, drives interaction

- Answer Agent — closes the loop with grounded answers

- Report Agent — turns the event into insight after it ends

Each one has its own job, its own data, its own rules, and its own dial. The Orchestrator reads a single SOUL.md to understand how the event should feel — voice, vocabulary, red lines, escalation rules — then routes context between the specialists and decides who acts when.
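To make "its own data" plus "a single SOUL.md" concrete, here is a minimal Python sketch of how an orchestrator might assemble each specialist's context. The same SOUL.md text goes first for everyone, followed only by the data sources that agent is scoped to. The post doesn't describe SOUL.md's format or ReactLive's internals, so the file contents, the `DATA_SCOPES` table, and `build_context` are all hypothetical. The scopes follow the post's own description of what Protect, Answer, and Engage see.

```python
# Hypothetical SOUL.md contents; the post doesn't specify the file's format.
SOUL_MD = """# Voice
Plain, warm, no hype.

# Red lines
No forward-looking statements about guidance.
"""

# What each specialist may see, simplified to labels taken from the post.
DATA_SCOPES = {
    "protect": ["submissions", "policies"],
    "answer": ["transcript", "uploaded_documents", "prior_qa"],
    "engage": ["engagement_signals", "topic_shifts"],
}


def build_context(agent: str, sources: dict[str, str]) -> str:
    """Shared SOUL.md first, then only the sources in this agent's scope."""
    scoped = [
        f"## {name}\n{sources[name]}"
        for name in DATA_SCOPES[agent]
        if name in sources  # skip sources that don't exist yet
    ]
    return "\n\n".join([SOUL_MD.strip(), *scoped])


live = {
    "submissions": "Q: Will hiring slow down?",
    "policies": "Hold anything quoting unreleased numbers.",
    "transcript": "CFO: Guidance was revised this morning.",
}

protect_ctx = build_context("protect", live)
answer_ctx = build_context("answer", live)
print("CFO:" in protect_ctx)                   # False: Protect never sees the transcript
print(answer_ctx.startswith(SOUL_MD.strip()))  # True: every agent gets the same SOUL.md
```

Keeping the scopes in one table means narrowing what an agent sees is a one-line change to that agent's entry, with no effect on anyone else's context.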

Five agents. One conductor. All three phases of the event (before, during, and after) covered end to end.

This isn't an architectural flex. It's the only way to give event hosts the control they actually need.

Why specialisation is control

Specifically, there are three reasons.

One job, one set of data, one set of rules. The Protect Agent only sees submissions and policies. The Answer Agent only sees the transcript, the documents you uploaded, and prior Q&A. The Engage Agent sees engagement signals and topic shifts. Each agent's behaviour can be reasoned about independently: "What does Protect do when it sees this?" is a question with a clean answer, because Protect doesn't also have to be thinking about whether to publish a poll.

One dial per agent. Every agent has four strength levels: Off, Suggest, Assist, Auto. Set Protect to Auto on day one because moderation is high-stakes and you want speed. Set Answer to Suggest for the first three events because you want a human reading every AI-generated answer before it goes out. Set Engage to Assist so it auto-publishes the safe stuff and asks for approval on the rest. You can't do that with one model — turning a single AI's "aggression" up affects everything it does, not just the one thing you wanted to change.

Failure is contained. If the Answer Agent is being too cautious, that's a problem with one agent. If it were one big AI doing everything, "too cautious about answers" might also mean "too cautious about flagging spam" or "missing topic shifts." Bugs would couple. Tuning one behaviour could silently shift every other one. With specialists, you can fix one agent without touching the others.
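A minimal sketch of the four-level dial, assuming each proposed action arrives with a yes/no "safe" classification (an assumption: the post doesn't say how Assist decides what counts as the safe stuff). `Strength`, `route_action`, and the `dials` table are hypothetical names, not ReactLive's API. What it illustrates is structural: each agent has its own entry, so changing one level cannot change another agent's behaviour.

```python
from enum import Enum


class Strength(Enum):
    """The four strength levels every agent has, per the post."""
    OFF = "off"
    SUGGEST = "suggest"
    ASSIST = "assist"
    AUTO = "auto"


def route_action(strength: Strength, is_safe: bool) -> str:
    """Decide what happens to one action an agent proposes (hypothetical).

    Returns "drop" (agent switched off), "review" (a human must approve
    first) or "execute" (the agent acts without waiting for approval).
    `is_safe` is an assumed upstream classification of the action.
    """
    if strength is Strength.OFF:
        return "drop"
    if strength is Strength.SUGGEST:
        return "review"
    if strength is Strength.ASSIST:
        # Assist acts alone only on actions classed as safe.
        return "execute" if is_safe else "review"
    # Strength.AUTO: no approval step.
    return "execute"


# One dial per agent: changing Answer's level never touches Protect's.
dials = {
    "protect": Strength.AUTO,
    "answer": Strength.SUGGEST,
    "engage": Strength.ASSIST,
}

print(route_action(dials["protect"], is_safe=False))  # execute (e.g. auto-hold)
print(route_action(dials["answer"], is_safe=True))    # review
print(route_action(dials["engage"], is_safe=True))    # execute
print(route_action(dials["engage"], is_safe=False))   # review
```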

What the Orchestrator actually does

If five agents are doing the work, you need something coordinating them. That's the Orchestrator — what we sometimes call the Conductor, or the Boss.

It does three things:

It reads SOUL.md. The same single file that defines the event's tone, voice, red lines, and escalation rules is read by the Orchestrator and propagated to every specialist. The Answer Agent's tone matches the Engage Agent's, which in turn matches the tone of the Protect Agent's escalation messages. One source of truth.

It routes context between specialists. When the Engage Agent clusters three duplicate questions, the Orchestrator passes that cluster to the Answer Agent so it doesn't generate the same response three times. When the Protect Agent flags a submission as borderline, the Orchestrator holds it back from Engage's priority ranking until a human decides. The agents don't talk directly to each other — the Orchestrator owns the timeline.

It escalates anything outside the agents' remit to the human moderator. This is the part that matters most. The Orchestrator is not the boss of your moderator. It's the boss of the agents. When something falls outside what any agent is trusted to handle at the level you set, the human gets it.
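The routing described above, sketched in Python under two assumptions: Protect labels each submission "clear", "borderline", or "blocked" (hypothetical labels), and Engage hands back clusters as lists of duplicate submissions. The `Orchestrator` class and its methods are illustrative only. Specialists never call each other; everything passes through the hub, which is what lets it hold borderline items back from ranking and send Answer one representative per cluster.

```python
from dataclasses import dataclass, field


@dataclass
class Submission:
    id: str
    text: str
    # Protect's verdict; these three labels are assumed for illustration.
    verdict: str = "clear"  # "clear" | "borderline" | "blocked"


@dataclass
class Orchestrator:
    """Hypothetical hub: specialists hand results here, never to each other."""
    awaiting_human: list[Submission] = field(default_factory=list)

    def for_engage(self, submissions: list[Submission]) -> list[Submission]:
        """Return what Engage may rank; borderline items wait for a human."""
        rankable = []
        for s in submissions:
            if s.verdict == "borderline":
                self.awaiting_human.append(s)  # held back until a human decides
            elif s.verdict == "clear":
                rankable.append(s)
            # "blocked" items are already held by Protect and go nowhere.
        return rankable

    def for_answer(self, clusters: list[list[Submission]]) -> list[Submission]:
        """Pass one representative per Engage cluster so Answer replies once."""
        return [cluster[0] for cluster in clusters if cluster]


subs = [
    Submission("q1", "Will hiring slow down?"),
    Submission("q2", "Are we pausing hiring?"),
    Submission("q3", "Is the Q3 number final?", verdict="borderline"),
]
orch = Orchestrator()
ranked = orch.for_engage(subs)
print([s.id for s in ranked])               # ['q1', 'q2']
print([s.id for s in orch.awaiting_human])  # ['q3']

# Suppose Engage clusters q1 and q2 as duplicates of one question.
print([s.id for s in orch.for_answer([ranked])])  # ['q1']
```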

A common worry we hear: does the Orchestrator override my moderator? No. Auto means the agent doesn't wait for approval. It does not mean the human can't intervene. The moderator can override, edit, or withhold anything any agent proposes, at any strength level, at any time.
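The precedence in that answer fits in a few lines of Python. `resolve`, `within_trust`, and the outcome strings are hypothetical; `within_trust` stands in for "inside what this agent is trusted to handle at the level you set." The only ordering that matters is that the moderator's decision is checked first, so it wins at every strength level, Auto included.

```python
from typing import Optional


def resolve(proposal: str, within_trust: bool, moderator: Optional[str] = None) -> str:
    """Final outcome for one agent proposal (hypothetical helper).

    `moderator` is the human's decision, if they have made one.
    """
    # The moderator wins at every strength level, Auto included.
    if moderator is not None:
        return moderator
    # Outside what the agent is trusted to handle: the human gets it.
    if not within_trust:
        return "escalate_to_moderator"
    return proposal


print(resolve("publish_answer", within_trust=True))                        # publish_answer
print(resolve("publish_answer", within_trust=False))                       # escalate_to_moderator
print(resolve("hold_submission", within_trust=True, moderator="release"))  # release
```

This sketch checks the moderator's input once, at decision time. It does not model reversing an action that an Auto agent has already taken.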

The "but isn't this overengineered?" objection

We've heard this one too. Five agents sounds like a lot. Wouldn't one good model and a thoughtful prompt do the same thing?

For a demo, sure. For a real event with real stakes, no — and here's the test.

Ask yourself: can I dial moderation up to fully autonomous while keeping AI answers in suggest-only?

If your tool is one big AI behind a chat interface, the honest answer is "kind of, with a long prompt, and the behaviour will drift." If your tool has separate Protect and Answer agents with independent strength levels, the answer is "yes, set Protect to Auto and Answer to Suggest, save the preset, done."
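The "save the preset" step can be sketched as plain data: a preset is a name plus one level per agent, validated against the four strength levels and serialised as JSON. The preset name, `make_preset`, and the JSON shape are hypothetical; the post doesn't say how ReactLive stores presets.

```python
import json

LEVELS = ("off", "suggest", "assist", "auto")
AGENTS = ("setup", "protect", "engage", "answer", "report")


def make_preset(name: str, **dials: str) -> dict:
    """Build a named preset, rejecting unknown agents or strength levels."""
    for agent, level in dials.items():
        if agent not in AGENTS:
            raise ValueError(f"unknown agent {agent!r}")
        if level not in LEVELS:
            raise ValueError(f"{agent}: unknown strength level {level!r}")
    # Each agent keeps its own independent entry.
    return {"name": name, "dials": dict(dials)}


# The test from the post: Protect fully autonomous, Answer suggest-only.
preset = make_preset("earnings-all-hands", protect="auto", answer="suggest")
saved = json.dumps(preset)
print(saved)
# {"name": "earnings-all-hands", "dials": {"protect": "auto", "answer": "suggest"}}
```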

That's the difference between a clever demo and a system event hosts can actually trust when the stakes are highest.

The shape of trust

There's a deeper point here, and it's worth saying directly.

Event hosts don't trust AI yet. Not because the technology isn't capable — it clearly is — but because the way most AI products are packaged is all or nothing. Either the AI runs the thing or it doesn't. Either you trust the chatbot completely or you turn it off.

Real trust isn't built that way. Real trust is built one job at a time. I trust this person to triage spam, but not yet to write answers in my voice. I trust this person to summarise the meeting, but not yet to decide who speaks next. Granular. Earned. Per-task.

That's the model ReactLive is built around. Five agents, four strength levels each, one dial at a time.

The next post in this series goes deep on those four strength levels — Off, Suggest, Assist, Auto — and why that dial is the thing that makes AI moderation actually trustworthy in production.

Specialisation gives you the agents. The dial is what gives you the control.

Next up: Off, Suggest, Assist, Auto — the dial that makes AI moderators trustworthy. A walk through the four strength levels every ReactLive agent ships with, and why earning trust is something you do per agent, per event.

Join the waitlist to get early access. Three free events. Locked-in pricing.